From Instructions to Intentions: The Next Programming Paradigm

The Rise of Intent: A Manifesto for Next-Generation Software Development

I’m Siddhesh, a Microsoft Certified Trainer, cloud architect, and AI practitioner focused on helping developers and organizations adopt AI effectively. As a Pluralsight instructor and speaker, I design and deliver hands-on AI enablement programs covering Generative AI, Agentic AI, Azure AI, and modern cloud architectures.

With a strong foundation in Microsoft .NET and Azure, my work today centers on building real-world AI solutions, agentic workflows, and developer productivity using AI-assisted tools. I share practical insights through workshops, conference talks, online courses, blogs, newsletters, and YouTube—bridging the gap between AI concepts and production-ready implementations.

"Programs must be written for people to read, and only incidentally for machines to execute." — Harold Abelson & Gerald Jay Sussman, Structure and Interpretation of Computer Programs

This essay started as a conversation, not a thesis.

My friend Zia and I had been going deep on a question that sounds deceptively simple: what do AI tools actually do in programming and software engineering? Are they clever autocomplete? Are they a new kind of collaborator? Are they replacing the programmer, augmenting them, or fundamentally changing what the job even is?

We kept circling back to history. Because if you want to understand whether something is genuinely new, you have to understand what came before — not just the tools, but the ideas. The people who shaped how we think about computation in the first place.

Somewhere in that conversation, Zia proposed something that I haven't been able to stop thinking about since. He suggested that what we are witnessing is not just a new tool or a new workflow, but the emergence of a new programming paradigm — one that deserves its own name: Intent-Oriented Programming.

The lineage behind it runs through something most working engineers have touched but few have named as a philosophical moment: specification-driven development, the quiet, decades-long rehearsal that made Zia's proposal possible.

This essay is my attempt to trace that lineage, to understand why Zia's proposal might be right, and to think honestly about what it means.

Part One: The Founding Fathers of Thought

Before there were programs, there were ideas about what programs could even be. Three figures loom largest in that prehistory, and understanding them is essential for grasping what is genuinely radical about Zia's proposal.

Alonzo Church — Computation as Transformation

In the early 1930s, a Princeton mathematician named Alonzo Church developed a formal system for expressing computation using only functions and their applications. He called it the lambda calculus, and in a 1936 paper he used it to show that there are problems in elementary number theory that no effective method can solve. No loops. No mutable variables. No state. Just functions taking inputs and returning outputs, composed together in arbitrarily complex ways.

Church was trying to answer a fundamental question — what does it mean to compute something? — and his answer was radical: computation is transformation. You take a value, apply a rule, and get a new value. Everything else is notation.

The lambda calculus is the theoretical bedrock of functional programming. Lisp, Haskell, Erlang, and much of what drives modern language design trace their intellectual lineage back to Church's work. When you use map, filter, or reduce in Python, you are thinking, in some small way, the way Alonzo Church thought.
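A small Python sketch makes the connection concrete. The order data below is invented for illustration. The point is that each step is a function from values to new values, composed together, with no loop counter and no mutated accumulator in the calling code.

```python
from functools import reduce

# Hypothetical order lines: (item, unit_price, quantity)
orders = [("pen", 1.50, 4), ("notebook", 3.00, 2), ("stapler", 12.00, 0)]

# filter: keep only the lines that were actually ordered
ordered = filter(lambda line: line[2] > 0, orders)

# map: transform each line into its subtotal
subtotals = map(lambda line: line[1] * line[2], ordered)

# reduce: fold the subtotals into a single value, starting from 0.0
total = reduce(lambda acc, x: acc + x, subtotals, 0.0)

print(total)  # 12.0
```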

What would Church make of AI agents?

It is tempting to imagine him horrified — all that non-determinism, all those side effects, all that stochastic messiness. But I think he would be more interested than appalled. The lambda calculus was, at its heart, a system for expressing transformations without specifying the mechanism. You declare what the transformation is; the system figures out how to apply it. That is, in spirit, exactly what intent-oriented systems do. Church might see in large language models an impure but fascinatingly powerful realisation of something he glimpsed theoretically: computation that operates on meaning rather than symbols alone.

Edsger W. Dijkstra — Programming as Proof

If Church gave us the theory, Edsger W. Dijkstra gave us the conscience.

Dijkstra was Dutch, combative, deeply principled, and constitutionally incapable of tolerating sloppiness. He is best known for two things: the shortest-path algorithm that bears his name, and a lifelong campaign for what he called the discipline of programming — the idea that code should be proven correct, not merely tested until it seems okay.

He is the one who declared that the GOTO statement should be considered harmful, in a 1968 letter that changed how an entire generation thought about program structure. He championed structured programming — clear, composable control flow, not arbitrary jumps. He argued that programs should be built from their correctness proofs, not the other way around. You should know why a program works before you run it.

Dijkstra was famously withering about testing as a substitute for reasoning:

"Program testing can be used to show the presence of bugs, but never to show their absence."

He believed software engineering was, at its core, a mathematical discipline — and that the failure to treat it as such was the root cause of nearly every software disaster.

What would Dijkstra make of AI agents?

Almost certainly appalled — and he would have had a point. The AI approach to software is fundamentally empirical where Dijkstra wanted deductive. You run the code, see if it works, iterate. You test against examples. You accept that the system might sometimes be wrong. These are precisely the habits of mind Dijkstra spent his career railing against.

But here is the tension: his deepest goal was to close the gap between what a programmer intended and what the code did. Intent-Oriented Programming attempts to close that gap differently — by making intent itself the specification, and delegating verification to automated systems. He might find the outputs interesting while remaining appalled by the process. That sounds about right for Dijkstra.

Alan Kay — Programming as Medium

Alan Kay is the third figure, and perhaps the most visionary. Kay, working at Xerox PARC in the 1970s, did not invent object-oriented programming in any strict sense — Simula came earlier — but he gave it its modern philosophy and ambition.

Kay's insight was that the computer was, above all else, a medium for thought. Not a calculator, not a business machine — a medium, like paper or paint, through which humans could express and explore ideas. His creation, Smalltalk, was a language where everything is an object, where objects communicate by sending messages, and where the programmer models the world rather than describes procedures.

Object-oriented programming, in Kay's view, was not primarily about code reuse or encapsulation. It was about expressiveness — giving programmers a language whose structure matched the structure of the problems they were solving.

Kay has spent decades arguing that we are not nearly ambitious enough about computing. He has consistently maintained that the personal computer revolution barely scratched the surface — that truly powerful computing should feel like learning to read and write, transformative in a civilisational sense.

What would Alan Kay make of AI agents?

Of all three figures, Kay is the most likely to be genuinely excited — and the most likely to think we are still thinking too small. He would probably note, with characteristic impatience, that we have built very powerful autocomplete and called it intelligence. But he would also see in natural-language interfaces the seed of something he has always wanted: computing truly accessible to anyone with an idea. His question would not be whether AI can write code, but whether it can help people think. Whether it expands the range of who gets to be a computational thinker.

That has always been Kay's deepest question, and Zia's paradigm may be the most compelling answer to it yet.

Part Two: The Long Arc of Abstraction

With those three figures as anchors, the history of programming paradigms becomes a coherent story rather than a series of disconnected fashions. Each shift is not just a new way to write code — it is a new way to think about computation. And each one moves in the same direction.

1940s: Machine Code — Speaking the Machine's Language

The earliest programmers had no abstraction at all. They wrote binary instructions directly — specific patterns of ones and zeros mapping to specific processor operations. The mental model required was that of the machine itself: registers, memory addresses, instruction cycles.

This was enormously powerful and completely inaccessible to anyone who had not spent years learning a specific architecture. Knowledge did not transfer between machines, and nothing about the notation resembled human language. You were, in a very real sense, thinking like a circuit.

1950s: Assembly — A First Layer of Humanity

Assembly gave programmers human-readable mnemonics: MOV, ADD, JMP. Each instruction still mapped one-to-one to a machine operation, but you could at least read code aloud and have it sound vaguely like instructions. The assembler did the mechanical work of translating.

This is the first instance of a pattern that would repeat many times: humans offload mechanical translation to a program, freeing themselves to think at a higher level. The assembler was, in a sense, the first coding assistant.
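A toy assembler shows that first offload in miniature. The three-instruction machine and its opcode numbers are invented for this sketch and correspond to no real processor. What it illustrates is the one-to-one, purely mechanical translation from mnemonic to number.

```python
# Hypothetical opcode table for an invented machine (not a real instruction set).
OPCODES = {"MOV": 0x01, "ADD": 0x02, "JMP": 0x03}


def assemble(source: str) -> list[int]:
    """Translate lines like 'ADD 5' into flat [opcode, operand, ...] numbers."""
    machine_code: list[int] = []
    for line in source.strip().splitlines():
        mnemonic, operand = line.split()
        # One mnemonic maps to exactly one machine operation.
        machine_code.extend([OPCODES[mnemonic], int(operand)])
    return machine_code


program = """
MOV 7
ADD 5
JMP 0
"""
print(assemble(program))  # [1, 7, 2, 5, 3, 0]
```

Everything the function does is lookup and bookkeeping, which is exactly the kind of work worth handing to a machine.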

1960s–70s: Structured Programming — The Dijkstra Revolution

Structured programming replaced arbitrary jumps with disciplined control flow: if/then/else, for, while, and functions. Languages like ALGOL, Pascal, and C made programs readable in a way they had never been before.

The mental model shifted from "instructions for a machine" to "a logical argument expressed in code." You could read a well-structured program and follow its reasoning.

This is when programming became a profession in the full sense. The skills were teachable, the concepts transferable, the output legible to anyone trained in the discipline.

1970s–80s: Object-Oriented Programming — The Kay Revolution

Object-oriented programming made the programmer a modeller rather than a sequencer. Instead of describing procedures, you described entities — their properties, their behaviours, the messages they exchange.

The mental model: the program is a society of interacting objects. Understanding a system means understanding its inhabitants and their relationships, not the precise instruction sequence that will execute.

This shift made very large systems manageable and made collaboration possible at scale. It is still the dominant paradigm in most professional software development.
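In Python terms, the society-of-objects model can be sketched as follows. The `Account` and `Bank` classes are hypothetical, and Python method calls are only a loose analogy for Smalltalk's message sending, but the shape is the same: each object guards its own state, and the coordinator asks rather than reaches in.

```python
class Account:
    """Hypothetical object that owns its balance and responds to messages about it."""

    def __init__(self, owner: str, balance: int = 0) -> None:
        self.owner = owner
        self._balance = balance

    def deposit(self, amount: int) -> None:
        self._balance += amount

    def withdraw(self, amount: int) -> bool:
        # The account, not the caller, decides whether the request is allowed.
        if amount > self._balance:
            return False
        self._balance -= amount
        return True

    def balance(self) -> int:
        return self._balance


class Bank:
    """Hypothetical coordinator: it sends messages to accounts, never touching their state."""

    def transfer(self, source: Account, target: Account, amount: int) -> bool:
        if source.withdraw(amount):
            target.deposit(amount)
            return True
        return False


alice, bob = Account("alice", 100), Account("bob")
bank = Bank()
print(bank.transfer(alice, bob, 30), alice.balance(), bob.balance())   # True 70 30
print(bank.transfer(alice, bob, 500), alice.balance(), bob.balance())  # False 70 30
```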

2000s–2020s: Specification-Driven Development — The Rehearsal Nobody Named

Somewhere in this period, a quieter revolution was building — one that did not arrive with a manifesto or a famous letter, but accumulated gradually across tools, frameworks, and practices that each pointed in the same direction.

Behaviour-Driven Development (BDD), introduced by Dan North in 2006, was one of the clearest expressions of it. BDD asked teams to write tests before code — but not in programming syntax. In plain English. Structured, yes, but readable by anyone:

Given a logged-in user

When they submit the checkout form

Then a payment should be processed

And a confirmation email should be sent

The specification was the test. The specification was, in some sense, the program. The code that followed was an implementation detail.
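To show how such a scenario becomes executable, here is a minimal plain-Python sketch that maps each step onto code. The `Checkout` class, `NotLoggedInError`, and the test function are hypothetical stand-ins. Real BDD tools such as Cucumber or behave bind the plain-English step text to functions through their own step-definition mechanisms rather than through comments.

```python
from dataclasses import dataclass, field
from typing import Optional


class NotLoggedInError(Exception):
    """Hypothetical error raised when checkout is attempted without a user."""


@dataclass
class Checkout:
    """Hypothetical system under test: it records side effects instead of performing them."""

    user: Optional[str] = None
    payments: list[float] = field(default_factory=list)
    emails_sent: list[str] = field(default_factory=list)

    def submit(self, amount: float) -> None:
        if self.user is None:
            raise NotLoggedInError("checkout requires a logged-in user")
        self.payments.append(amount)  # stand-in for calling a payment provider
        self.emails_sent.append(f"confirmation to {self.user}")  # stand-in for sending email


def test_checkout_scenario() -> None:
    # Given a logged-in user
    checkout = Checkout(user="ada@example.com")
    # When they submit the checkout form
    checkout.submit(amount=42.0)
    # Then a payment should be processed
    assert checkout.payments == [42.0]
    # And a confirmation email should be sent
    assert checkout.emails_sent == ["confirmation to ada@example.com"]


test_checkout_scenario()
print("scenario passed")
```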

GraphQL (2015) made the same move at the API layer. Instead of stitching together responses from several fixed endpoints, a client declared the shape of the data it wanted. The execution engine figured out the rest.
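A toy resolver can illustrate the idea without any GraphQL library: the client declares the fields it wants as a nested shape, and the resolver walks that shape over the available data. The `resolve` function, the `USER` record, and the dictionary-based query format are simplifications invented for this sketch. They are not GraphQL's query language or any server's API.

```python
from typing import Any

# Hypothetical backing data; a real server would fetch this from databases or services.
USER = {
    "id": 7,
    "name": "Ada",
    "email": "ada@example.com",
    "orders": [
        {"id": 1, "total": 12.0, "status": "shipped"},
        {"id": 2, "total": 30.0, "status": "pending"},
    ],
}


def resolve(shape: dict[str, Any], data: Any) -> Any:
    """Return only the fields named in `shape`; a value of None marks a leaf field."""
    if isinstance(data, list):
        # Apply the same declared shape to every element of a list.
        return [resolve(shape, item) for item in data]
    return {
        key: data[key] if sub_shape is None else resolve(sub_shape, data[key])
        for key, sub_shape in shape.items()
    }


# The client declares what it wants, loosely like `{ name orders { total } }`.
query = {"name": None, "orders": {"total": None}}
print(resolve(query, USER))
# {'name': 'Ada', 'orders': [{'total': 12.0}, {'total': 30.0}]}
```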

Terraform and infrastructure-as-code did it for systems. You wrote a description of the infrastructure you wanted to exist — servers, networks, permissions — and the tool reconciled the current state of the world with your specification. You were not scripting actions; you were declaring outcomes.
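The reconciliation idea can be sketched in a few lines: compare the declared desired state with the observed current state, and derive a plan of actions. This is a simplified illustration, not how Terraform is actually implemented; the `plan` function, resource names, and attribute dictionaries are all hypothetical.

```python
def plan(desired: dict[str, dict], current: dict[str, dict]) -> list[tuple[str, str]]:
    """Hypothetical planner: return (action, resource_name) pairs moving `current` to `desired`."""
    actions: list[tuple[str, str]] = []
    for name in sorted(desired.keys() | current.keys()):
        if name not in current:
            actions.append(("create", name))
        elif name not in desired:
            actions.append(("delete", name))
        elif desired[name] != current[name]:
            actions.append(("update", name))
        # Resources whose attributes already match need no action.
    return actions


desired = {
    "web_server": {"size": "large", "region": "eu-west"},
    "database": {"size": "medium", "region": "eu-west"},
}
current = {
    "web_server": {"size": "small", "region": "eu-west"},
    "old_cache": {"size": "small", "region": "eu-west"},
}
print(plan(desired, current))
# [('create', 'database'), ('delete', 'old_cache'), ('update', 'web_server')]
```

Note that `plan(desired, desired)` returns an empty list: once the world matches the declaration, there is nothing left to do, which is what makes declarative tools safe to re-run.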

Advanced type systems — in Haskell, Rust, TypeScript, and others — took it further still. A sufficiently expressive type signature is a machine-checkable specification. When you encode your business rules in types, you are not writing comments or documentation. You are writing a formal description of what is and is not permitted, and letting the compiler enforce it. Making illegal states literally unrepresentable.
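Python's type hints are checked by external tools such as mypy rather than enforced at runtime, so the following is only an approximation of what Haskell, Rust, or TypeScript would guarantee. It shows the technique on a hypothetical order-payment example: rather than a boolean flag plus an optional receipt, which would allow a paid order with no receipt, the status is a union of two types that admit only the legal combinations.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Unpaid:
    """Hypothetical state: an order awaiting payment has no receipt field to get wrong."""
    amount_due: float


@dataclass(frozen=True)
class Paid:
    """Hypothetical state: a paid order must carry a receipt ID; it is not optional."""
    receipt_id: str


# A bool flag plus an Optional[str] receipt would allow four combinations,
# including "paid with no receipt". This union admits only the two legal ones.
PaymentStatus = Union[Unpaid, Paid]


def describe(status: PaymentStatus) -> str:
    if isinstance(status, Paid):
        return f"paid, receipt {status.receipt_id}"
    return f"unpaid, {status.amount_due:.2f} due"


print(describe(Unpaid(amount_due=19.5)))    # unpaid, 19.50 due
print(describe(Paid(receipt_id="R-1001")))  # paid, receipt R-1001
```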

What unites all of these is a single principle: the distance between the human's description of what they want and the system's execution of it should be as small as possible. The programmer's job is to specify; the tooling's job is to satisfy.

This is specification-driven development. It was not called a paradigm, and perhaps that is why it has been underestimated. But in retrospect, it was the dress rehearsal for everything that follows.

In specification-driven development, the human describes; the toolchain executes. The gap narrows — but the human is still writing in formal notation. Intent-Oriented Programming removes that last constraint.

Dijkstra would have admired the rigour of type-driven development — it is the closest practical programming has come to his vision of programs proven correct before they run. Church would have recognised BDD's declarative structure as a cousin of the lambda calculus: express the transformation, not the mechanism. Kay would have appreciated that specification languages were getting closer to natural thought — but would have noted, impatiently, that they were still formal languages. Still notation.

That impatience is precisely what Zia's proposal answers.

Part Three: What AI Tools Actually Do

This is the heart of what Zia and I kept returning to. Strip away the hype, the breathless announcements, the fears — what do AI tools actually do in the practice of software engineering?

The answer is more interesting than either optimists or pessimists usually acknowledge.

What They Do Well

AI tools are compression engines for tacit knowledge. When you ask an AI assistant to write a debounce function, scaffold a Next.js project, or write a regex for email validation — you are not asking it to think. You are asking it to recall, pattern-match, and assemble. It does this at a scale and speed no human could match, drawing on the accumulated written knowledge of the entire software engineering profession.

For this category of work (boilerplate, scaffolding, standard patterns), AI tools are genuinely transformative. Work that used to take an hour can take a few minutes.

They are remarkably good at translation. They bridge gaps between a vague description and a plausible implementation, between a codebase in Python and one in Go, between undocumented code and readable comments. In each case, they are doing the same thing: closing the gap between a human expression and a formal computational one. This is, in other words, what specification-driven tooling was already doing — but with natural language as the interface instead of Gherkin or a type signature.

They function as thinking partners. Not oracles — they are wrong often enough that uncritical trust is dangerous — but as rubber ducks with opinions. Explaining your problem to an AI assistant, getting a response that misses the point, and then articulating why it missed the point is often enough to unlock the solution yourself. The Socratic function, it turns out, does not require genuine understanding to be useful.

What They Do Not Do

AI tools do not understand your problem in any deep sense. They do not know that your authentication system is part of a healthcare product with regulatory constraints. They do not know that your "simple refactor" is happening the week before a major launch. They do not know the history of the codebase, the political dynamics of the team, or the technical debt accumulated three years ago that must now be carefully navigated.

Context that is not written down is invisible to them.

This is the first and most important limitation. Most of the important knowledge in software engineering is never written down. It lives in heads, in habits, in the careful judgments engineers make a hundred times a day without articulating them. This was true of BDD specifications too: a Gherkin scenario only captures what someone thought to write down. AI amplifies that problem rather than solving it.

AI tools also do not have aesthetic judgment in any meaningful sense. They can produce code that works. They rarely produce code that is beautiful: structured in a way that will be easy to extend, easy to read, and easy to reason about six months from now. The craft of software engineering remains stubbornly human.

And AI tools do not tell you what to build. They help you build what you ask for. Whether what you are asking for is the right thing — whether it solves the actual problem, serves the actual users, creates the actual value — is entirely outside their scope.

The New Shape of the Work

What changes is the distribution of where human attention goes.

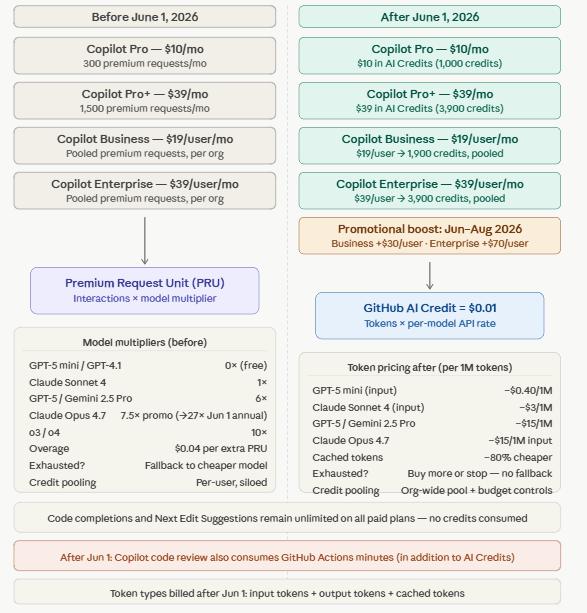

Red: where most human energy used to go. Green: where it goes in the new model.

In traditional software engineering, a significant portion of a programmer's time was spent on mechanical translation — turning a mental model of desired behaviour into correct, syntactically valid, working code. Debugging syntax errors. Looking up API signatures. Writing boilerplate.

AI tools absorb much of that. The programmer's attention shifts upstream and downstream. Upstream: more time thinking about what to build and why, designing the system's shape before any code is written. Downstream: more time evaluating the output, reading generated code critically, catching subtle errors that come not from syntax but from misunderstood requirements.

The job does not disappear. It shifts. And the skills that matter shift with it.

Part Four: Zia's Proposal — Intent-Oriented Programming

All of which builds to the insight at the heart of this essay — the one that emerged from Zia's thinking and that I haven't been able to get out of my head since he articulated it.

We are at the beginning of a genuine paradigm shift. And Zia's name for it is exactly right: Intent-Oriented Programming.

The Proposal

In every previous paradigm, the fundamental act of programming was translation. You took a human intention — a desired behaviour, a business rule, a computational goal — and expressed it in increasingly formal terms until it became something a machine could execute. The programmer was the translator.

Specification-driven development narrowed that gap dramatically. BDD got close enough that a non-programmer could write a test. A type system got close enough that a domain expert could read the constraints. But the notation was still formal. The last mile was still a cliff.

In Intent-Oriented Programming, the programmer does not translate. They express the intent directly — in something close to natural language — and the system handles the translation entirely.

This is not merely a better interface to the old paradigms. It is a different relationship between the human and the computation. The code is no longer the product. It is the byproduct. The thing being designed is the behaviour. The thing being built is the outcome.

Specification-driven development was the crucial bridge. It proved, in practice, that the specification could be the primary artifact — that code was already becoming a downstream concern. BDD showed that English sentences could drive test suites. Type systems showed that formal descriptions of intent could catch errors before execution. Terraform showed that declaring desired state was superior to scripting transitions. Each of these was IOP with the last translation step still intact. What AI agents remove is that final step — the one where a human still had to express intent in formal notation. The notation dissolves. The intent remains.

What This Inherits from the Founding Figures

The proposal does not come from nowhere. It is the natural conclusion of a lineage.

Church built a system for expressing transformations without specifying the mechanism — declare the what, the system handles the how. Zia's paradigm takes that principle from mathematical functions and applies it to entire software systems.

Dijkstra's deepest concern — beneath all the rigour and combativeness — was the gap between what a programmer intended and what the code did. Specification-driven development was the first serious practical attempt to close it. Intent-Oriented Programming closes it further still: by making the intent itself the specification, in natural language rather than formal notation. The goal is the same; the mechanism is radically different.

Kay wanted computing to be a medium accessible to anyone with an idea, not just trained engineers. Specification-driven development got closer — BDD let business analysts write tests, Terraform let infrastructure teams declare systems. But they still required learning a notation. Intent-Oriented Programming removes that last barrier. The wall between having an idea and building it is lower than it has ever been.

The Core Skills of the New Paradigm

The skills that define a good practitioner of Intent-Oriented Programming are not the same as those of a good traditional programmer — though the overlap is significant.

Specification precision becomes central. Vague intentions produce vague results. The ability to describe what you want precisely enough for an AI to interpret correctly — including constraints, edge cases, and explicit what-not-to-dos — is the new fundamental skill. It is, in some sense, a return to Dijkstra's insistence on rigour and BDD's discipline of writing acceptance criteria before writing code, but now expressed in natural language rather than formal notation.

Critical evaluation of outputs becomes essential. The AI generates; the human judges. Reading code you did not write, assessing whether it actually does what you intended, catching subtle misinterpretations that look like they work but quietly don't — this requires deep technical knowledge. The programmer who cannot read is not safe in a world where they are no longer writing.

Systems thinking becomes more important, not less. When individual functions are cheap to generate, the value of the human is in understanding how they fit together — the architecture, the data flows, the failure modes, the evolution paths. This is the skill that specification-driven development already demanded of its best practitioners.

Ethical and contextual judgment cannot be delegated at all. The AI does not know what should be built. It does not know who will be affected, what the risks are, what the downstream consequences might be. That knowledge is irreducibly human.

Concerns Worth Sitting With

Zia's paradigm is not a clean win. There are real costs that deserve honest acknowledgment.

Legibility risk. When code is generated, the chain of accountability is murkier. A programmer who did not write a piece of code may not understand it well enough to debug it when it breaks. Generated code tends to be correct but not beautiful — functional but opaque. The profession will need new practices for understanding and maintaining code that nobody authored. Specification-driven development had an early version of this problem — Gherkin scenarios could drift from the actual code they were meant to describe. With AI generation, the drift can be deeper and harder to detect.

Specification errors as the new bug class. Syntax errors nearly disappear. In their place comes a subtler and more dangerous failure mode: the system that does precisely what you asked, but not what you wanted. These bugs are hard to detect because the code is correct; the problem lies in the gap between specification and true intention. This is Dijkstra's nightmare, wearing a new costume. BDD practitioners already knew this problem — a scenario can be green and still be wrong, if it specifies the wrong thing. IOP amplifies the surface area.
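A deliberately small sketch of this failure mode, using a hypothetical `sort_names` function: the acceptance check someone thought to write passes, yet the function violates an expectation nobody wrote down.

```python
def sort_names(names: list[str]) -> list[str]:
    """Hypothetical function. Spec as written: 'return the names in alphabetical order'."""
    return sorted(names)  # sorts by Unicode code point, so uppercase comes before lowercase


# The acceptance check someone thought to write: it passes.
assert sort_names(["bob", "alice", "carol"]) == ["alice", "bob", "carol"]

# The case nobody wrote down: "Zoe" lands before "alice", which is correct
# by code point but not what a human reader means by alphabetical.
print(sort_names(["alice", "Zoe", "bob"]))  # ['Zoe', 'alice', 'bob']


def sort_names_as_intended(names: list[str]) -> list[str]:
    """Encodes the missing intent: compare names case-insensitively."""
    return sorted(names, key=str.casefold)


print(sort_names_as_intended(["alice", "Zoe", "bob"]))  # ['alice', 'bob', 'Zoe']
```

Neither version is buggy in the narrow sense; the second simply encodes more of the intent. That is the gap the specification has to close.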

The tacit knowledge problem. As fewer programmers engage in the mechanical work of writing code, fewer develop the intuitions that come from that work. The deep understanding of how systems behave, what makes them fail, what makes them scale — much of that is learned by writing a lot of code and watching it break. If the code is generated, that learning path is disrupted. We do not yet know what replaces it.

Concentration of capability. If building sophisticated software becomes dependent on access to powerful AI systems controlled by a small number of organisations, the democratisation story has a dark underside. The barrier to entry falls — but falls onto a foundation controlled by others.

The Spectrum, Not the Switch

It would be wrong to present this as a binary. Intent-Oriented Programming is a direction, not a destination. Specification-driven development does not disappear — it becomes a layer within the new paradigm, available when precision demands it.

High-stakes systems — medical software, aviation control, financial infrastructure — will remain closer to the implementation end of the spectrum for a long time, likely anchored in specification-driven approaches where formal verification is still possible. The cost of a misunderstood specification is too high, and formal methods will need to mature considerably before pure intent can be trusted without rigorous checking.

Exploratory work, prototyping, and creative projects are already living in fully intent-oriented territory. The vast middle of professional software engineering is in transition, drifting from the implementation end of the spectrum, through the specification-driven zone, toward the agentic, intent-oriented end, and it will remain in transition for a decade or more.

Part Five: What This Means

The question Zia started with — what do AI tools actually do in programming? — has an answer that is more modest and more profound than either the hype or the fear suggests.

They are very good at mechanical translation. They absorb the rote work, the pattern matching, the boilerplate generation. They make the gap between intent and implementation smaller, faster, and cheaper to cross — continuing the work that specification-driven development began, but removing the requirement for formal notation entirely.

They do not replace judgment. They do not provide context. They do not tell you what to build or why it matters. They do not hold the ethical weight of the choices embedded in every system.

What they do is change the shape of the work — and that, over time, changes the shape of the profession, the shape of who can participate in it, and the shape of what it means to build software at all.

Every paradigm shift in the history of programming has done exactly this. Machine code gave way to assembly, which gave way to structured programming, which gave way to object-orientation, which gave way to specification-driven development, which is now giving way to intent-oriented programming. Each shift reduced the mechanical friction between human intent and computational outcome. Each shift changed who could participate.

Church understood computation as transformation. Dijkstra understood it as argument. Kay understood it as medium. The specification-driven era understood it as declaration. The synthesis Zia names — computation as dialogue, between human intent and machine execution, conducted in natural language — is the territory we are entering.

It is early. The systems are imperfect. The risks are real. The professional norms, the legal frameworks, the educational curricula — none of them have caught up yet.

But the direction is clear. And naming it, as Zia did in a single, clear-eyed observation halfway through a long and wandering conversation, is the first step toward navigating it wisely.

This essay is the direct result of a deep conversation with my friend Zia. He was the one who proposed Intent-Oriented Programming as a distinct paradigm — the idea that we have crossed not just a tooling threshold but a conceptual one, that intent and outcome are becoming the primary interface between humans and computation. I am grateful for his thinking, and I hope this essay does justice to it. The elaborations, the historical detours, and any errors are entirely my own.

Further Reading

Alonzo Church, "An Unsolvable Problem of Elementary Number Theory" (1936)

Edsger W. Dijkstra, "Go To Statement Considered Harmful" (1968)

Alan Kay, "The Computer Revolution Hasn't Happened Yet" — OOPSLA '97 keynote

Harold Abelson & Gerald Jay Sussman, with Julie Sussman, Structure and Interpretation of Computer Programs (2nd ed., 1996)

Fred Brooks, The Mythical Man-Month — still the most honest book about software engineering

Dan North, "Introducing BDD" (2006) — the essay that made specifications executable

Martin Fowler, Domain-Specific Languages (2010) — how narrow languages narrow the gap between domain expert and implementation

Edwin Brady, Type-Driven Development with Idris (2017) — the rigorous end of specification: types as proofs