MLOps, AIOps, LLMOps, and GenAIOps

A Complete Guide to Understanding *Ops in 2025

I’m Siddhesh, a Microsoft Certified Trainer, cloud architect, and AI practitioner focused on helping developers and organizations adopt AI effectively. As a Pluralsight instructor and speaker, I design and deliver hands-on AI enablement programs covering Generative AI, Agentic AI, Azure AI, and modern cloud architectures.

With a strong foundation in Microsoft .NET and Azure, my work today centers on building real-world AI solutions, agentic workflows, and developer productivity using AI-assisted tools. I share practical insights through workshops, conference talks, online courses, blogs, newsletters, and YouTube—bridging the gap between AI concepts and production-ready implementations.

Introduction

Artificial Intelligence is no longer confined to research labs—it’s powering business processes, customer experiences, and IT operations at scale. With this growth comes a new challenge: how do we manage, deploy, and operate AI systems reliably?

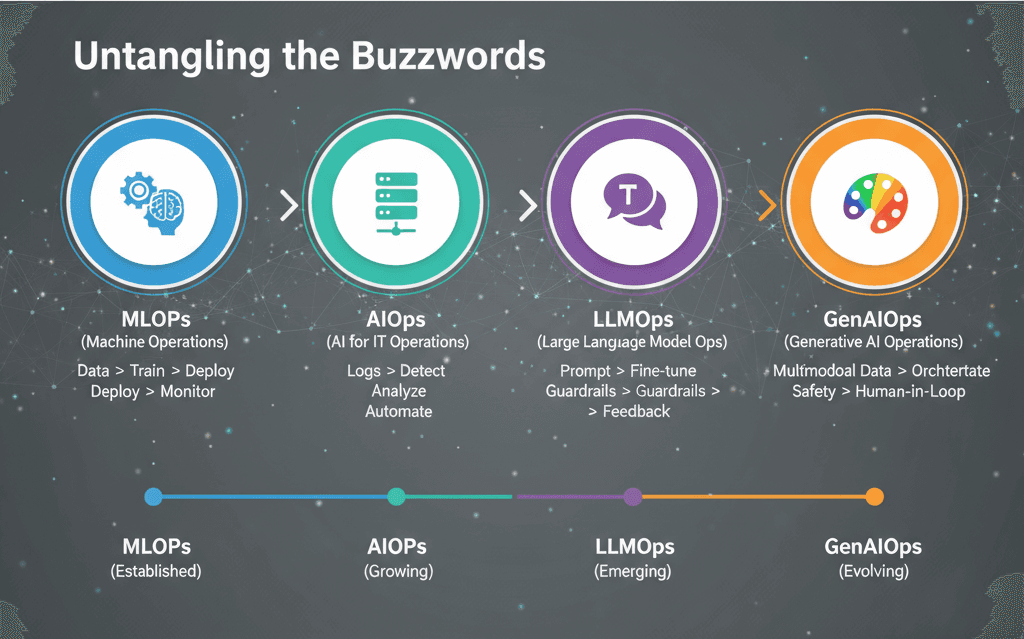

That’s where the world of “Ops” comes in. Over the years, we’ve seen terms like MLOps, AIOps, LLMOps, and now GenAIOps emerge. They sound similar but address very different problems. In this post, we’ll demystify them, compare their scope, and explore where they fit in the modern AI landscape.

1. MLOps – Machine Learning Operations

MLOps is the DevOps for machine learning. It focuses on automating the lifecycle of ML models:

Core Idea: Make model development, deployment, and monitoring as systematic as software engineering.

Pipeline: Data ingestion → model training → validation → deployment → monitoring → retraining.

Key Tools: MLflow, Kubeflow, Airflow, Vertex AI, Azure ML.

Use Cases: Predictive analytics, fraud detection, recommendation engines.

Think of MLOps as the backbone that keeps ML models in production reliable and scalable.
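To make the validation and retraining steps of that pipeline concrete, here is a minimal sketch in plain Python. It is an illustration under assumptions, not a recipe from any of the tools listed above: the names `ModelVersion`, `should_promote`, and `needs_retraining`, the thresholds, and the sample numbers are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class ModelVersion:
    name: str
    accuracy: float  # validation accuracy on a held-out set (illustrative metric)


def should_promote(candidate: ModelVersion, production: ModelVersion,
                   min_gain: float = 0.01) -> bool:
    """Validation gate: promote only if the candidate beats production by min_gain."""
    return candidate.accuracy >= production.accuracy + min_gain


def needs_retraining(training_values: list[float], live_values: list[float],
                     max_shift: float = 0.2) -> bool:
    """Crude drift check: flag retraining when the live feature mean moves more
    than max_shift (relative) away from the training mean.
    Assumes the training mean is non-zero."""
    baseline = mean(training_values)
    shift = abs(mean(live_values) - baseline) / abs(baseline)
    return shift > max_shift


# Hypothetical model versions and feature samples.
production = ModelVersion("fraud-v1", accuracy=0.91)
candidate = ModelVersion("fraud-v2", accuracy=0.93)

print(should_promote(candidate, production))                          # True
print(needs_retraining([100, 110, 90, 105], [150, 160, 140, 155]))    # True
```

In a real setup, checks like these would typically run as steps inside an orchestrated pipeline, with the metrics coming from an experiment tracker rather than hard-coded values.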

2. AIOps – Artificial Intelligence for IT Operations

AIOps is about using AI to manage IT operations. Where MLOps is about getting ML models into production and keeping them healthy, AIOps applies AI to the IT estate itself to improve system uptime, reliability, and efficiency.

Core Idea: Apply machine learning to logs, metrics, and events to detect anomalies, predict outages, and automate responses.

Pipeline: Data collection → correlation → anomaly detection → root cause analysis → automated remediation.

Key Tools: Dynatrace, Moogsoft, Splunk ITSI, Datadog.

Use Cases: Monitoring cloud infrastructure, detecting security anomalies, reducing false alerts.

Think of AIOps as an AI-powered IT assistant that keeps systems running smoothly.
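The anomaly detection step is the easiest one to picture in code. The sketch below uses a rolling z-score over a metric series, which is one simple statistical technique and not necessarily what any of the commercial tools above do internally. The function name `detect_anomalies`, the window size, the threshold, and the CPU readings are assumptions made for illustration.

```python
from __future__ import annotations

from statistics import mean, stdev


def detect_anomalies(values: list[float], window: int = 5,
                     threshold: float = 3.0) -> list[int]:
    """Return the indexes whose value deviates from the mean of the preceding
    `window` points by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical CPU utilisation samples (percent), with one spike.
cpu_percent = [41, 43, 42, 40, 44, 42, 43, 95, 42, 41]
print(detect_anomalies(cpu_percent))  # [7]
```

Only the spike at index 7 is flagged. A production system would then correlate that signal with other events before deciding on root cause or remediation.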

3. LLMOps – Operations for Large Language Models

With the rise of GPT, LLaMA, and other large language models, we needed a new operational layer: LLMOps.

Core Idea: Manage the lifecycle of large language models in production—beyond traditional ML.

Pipeline: Prompt engineering → fine-tuning → deployment (APIs, agents) → monitoring (latency, hallucinations, bias) → feedback loops.

Key Challenges:

- Handling huge model sizes & costs.
- Guarding against hallucinations.
- Monitoring prompt performance.
- Ensuring data privacy and compliance.

Key Tools: LangChain, Guardrails, Weights & Biases, TruLens, Ragas.

Use Cases: Chatbots, copilots, content generation, summarization.

If MLOps was built for models trained largely on structured data, LLMOps is designed for unstructured, generative, language-heavy models.
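The monitoring stage (latency, hallucinations) can be sketched as a thin wrapper around a model call. This is a simplified illustration, not the API of any tool listed above: `stub_model` is a hypothetical stand-in for a real LLM endpoint, and `is_grounded` is a deliberately naive word-overlap heuristic, far cruder than what dedicated evaluation libraries provide.

```python
from __future__ import annotations

import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class CallRecord:
    prompt: str
    response: str
    latency_ms: float
    grounded: bool


def _words(text: str) -> set[str]:
    """Lower-cased words with basic trailing punctuation stripped."""
    cleaned = {w.strip(".,!?").lower() for w in text.split()}
    cleaned.discard("")
    return cleaned


def is_grounded(response: str, context: str, min_overlap: float = 0.5) -> bool:
    """Naive hallucination proxy: share of response words found in the context."""
    response_words = _words(response)
    if not response_words:
        return False
    overlap = len(response_words & _words(context)) / len(response_words)
    return overlap >= min_overlap


def monitored_call(model: Callable[[str], str], prompt: str,
                   context: str) -> CallRecord:
    """Call the model, recording latency and the groundedness flag."""
    start = time.perf_counter()
    response = model(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    return CallRecord(prompt, response, latency_ms, is_grounded(response, context))


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM API call.
    return "Refunds are processed within 5 business days."


context = "Our policy: refunds are processed within 5 business days of approval."
record = monitored_call(stub_model, "How long do refunds take?", context)
print(record.grounded)  # True
```

Records like these can be logged and aggregated to feed the feedback loop at the end of the pipeline.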

4. GenAIOps – Operations for Generative AI

GenAIOps takes things a step further—it’s not just about text-based LLMs, but the entire Generative AI ecosystem (text, image, audio, video, multimodal).

Core Idea: Provide governance, scalability, and responsible AI practices for all generative models.

Pipeline: Multi-modal data ingestion → foundation model deployment → orchestration with agents → safety guardrails → human-in-the-loop feedback.

Key Concerns:

- Cost optimization (GPU-heavy workloads).
- Safety and compliance (toxicity, bias, IP issues).
- Orchestrating multi-agent systems.
- Scaling multimodal models.

Emerging Tools: LangGraph, CrewAI, Semantic Kernel, AutoGen.

Use Cases: Enterprise copilots, creative content generation, multimodal assistants.

GenAIOps is still evolving, but it’s where enterprises are headed as they look beyond just text-based AI.
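As a rough picture of how safety guardrails, cost tracking, and human-in-the-loop review might fit together, here is a small sketch. Everything in it is an assumption made for illustration: the `Pipeline` and `GenerationRequest` classes, the blocklist approach, the rule that non-text output goes to review, and the cost rate are hypothetical and not taken from the tools named above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GenerationRequest:
    modality: str       # e.g. "text", "image", "audio"
    prompt: str
    gpu_seconds: float  # estimated compute for this request (illustrative)


@dataclass
class Pipeline:
    blocked_terms: set[str]
    cost_per_gpu_second: float
    review_queue: list[GenerationRequest] = field(default_factory=list)
    total_cost: float = 0.0

    def handle(self, request: GenerationRequest) -> str:
        # Safety guardrail: reject prompts that contain a blocked term.
        if set(request.prompt.lower().split()) & self.blocked_terms:
            return "rejected"
        # Cost tracking: only requests that pass the guardrail consume GPU time.
        self.total_cost += request.gpu_seconds * self.cost_per_gpu_second
        # Human-in-the-loop: in this sketch, non-text output waits for review.
        if request.modality != "text":
            self.review_queue.append(request)
            return "pending_review"
        return "released"


# Hypothetical blocklist, rate, and requests.
pipeline = Pipeline(blocked_terms={"counterfeit"}, cost_per_gpu_second=0.002)
requests = [
    GenerationRequest("text", "summarize the quarterly report", 1.5),
    GenerationRequest("image", "product banner for spring sale", 12.0),
    GenerationRequest("text", "design a counterfeit logo", 2.0),
]
statuses = [pipeline.handle(r) for r in requests]
print(statuses)  # ['released', 'pending_review', 'rejected']
print(round(pipeline.total_cost, 3))  # 0.027
```

A real deployment would replace the blocklist with proper content-safety classifiers and the fixed rate with actual billing data, but the ordering of checks is the point here.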

Comparison Table

| Aspect | MLOps | AIOps | LLMOps | GenAIOps |
| --- | --- | --- | --- | --- |
| Focus | ML model lifecycle | IT operations automation | LLM lifecycle (prompts, fine-tuning) | Full generative AI lifecycle |
| Data Type | Structured, tabular | Logs, metrics, events | Unstructured text | Text, image, video, multimodal |
| Goal | Reliable ML deployment | Smarter, automated IT operations | Safe & effective LLM deployments | Scaling and governing GenAI |
| Maturity | Established | Growing adoption | Emerging | Early-stage, evolving |

The Road Ahead

MLOps will remain the foundation for traditional ML.

AIOps will grow as cloud and hybrid IT infrastructures get more complex.

LLMOps will become critical as more enterprises build on top of GPT-like models.

GenAIOps is the future—covering governance, safety, and orchestration across multiple generative modalities.

The bottom line: these aren’t just buzzwords—they represent the evolution of how we operationalize intelligence at scale.

If you’re a developer, start with MLOps concepts.

If you’re in IT, explore AIOps.

If you’re experimenting with GPT-like models, look at LLMOps.

And if you’re thinking about the future of enterprise AI, keep an eye on GenAIOps.

See you in the next post.